Greg Armstrong --

[Most recent update: July 2019]

Here's the question: Will bilateral aid agencies hold the multilaterals to account for results or not? UN agencies’ results reporting is inconsistent, and the results frameworks of SIDA, AusAID and DANIDA remain ambiguous. This post reviews results frameworks from USAID, DFID, CIDA and EuropeAid.

Level of Difficulty: Moderate to complex

Primarily useful for: Project managers, national partners

Length: 23 documents, 1,444 p.

Most useful: CIDA RBM Guide, DFID LFA Guide and EuropeAid Capacity Development toolkit

Limitations: Mounds of bureaucratic language in many of the bilateral documents make it difficult to identify and take effective guidance from potentially useful material.

Who these materials are for

Project managers, evaluators and national partners trying to understand how USAID, DFID, CIDA and EuropeAid define results and frame their approaches to RBM.

Background: Ambiguous results chains at the UN, and some bilateral agencies.

In my previous three posts, I examined how vague UN RBM frameworks can give some agencies a rationale for avoiding reporting on results and simply describing their completed activities instead. I also looked at how similar ambiguities in the results frameworks and definitions from AusAID, DANIDA and SIDA would make it difficult for them to hold the UN agencies to higher standards of results reporting. This fourth and final post in the series briefly surveys how the results frameworks of four more bilateral agencies compare to those of the OECD/DAC and UNDAF. As I noted in past posts, this review covers only those agencies where information, in English, could be obtained in reasonable time from their own or associated (and publicly accessible) websites. For those who want more detail, links to the relevant donor agency RBM sites can be found at the bottom of this article.

I am proceeding from the premise, again, that “cause and effect” is not a reasonable description of what is intended by a results chain, but rather that it is a notional concept of how activities can contribute to intended results.

The USAID Results Framework

Most useful: 29 pages of links and references in the Guide on Planning.

Despite its commitment to improved knowledge management, noted in the OECD DAC peer review of the United States [99 p.], last conducted in 2006, USAID remains, at least at this writing in 2010, one of the bilateral agencies for which it is most difficult to find clear RBM guidelines. USAID has also recently come under criticism for its severe editing of reports from collaborating partners.

And it is not just USAID itself which is difficult to understand, but to some extent also organizations such as the Millennium Challenge Corporation, which, again at this writing in 2010, has a largely unintelligible list of indicators for what it calls results – listing the number of farmers trained, or millions of dollars in irrigation contracts funded--in other words, completed activities--rather than listing the changes (results) these activities lead to. If you can make sense, for example, of the Tanzanian results table, you are smarter than I am, or at least you have a lot of free time, or both. [Editing note, January 2012: That results table is, as of January 2012, no longer available, and a greater emphasis now appears to be put on longer-term results, and impact evaluations at the MCC website. But, that is a review for another day.]

Among the hundreds of documents on the USAID website, there are only a few which have any kind of clear results definitions and those definitions are buried deep in bureaucratic jargon.

The tasks for USAID are, of course, complicated by the integration of its work planning with that of the State Department, and the added complexities of integrating humanitarian assistance with military operations – with all of the ambiguity that creates about the nature of results.

The USAID Guide on Planning [77 p.], updated in 2010, presents its Results Framework as moving from activities to Outputs, to Intermediate Results, and finally to Assistance Objectives -- what other agencies might call Impacts or Ultimate Outcomes. So, the USAID results chain would appear to look like this:

Activities→Outputs→Intermediate Results→Assistance Objectives

The 2006 DAC peer review noted that the USAID reporting system

“…focuses mainly on “physical deliverables” (e.g. numbers of schools, numbers of clinics, etc.). With the new orientation in US foreign policy, there is an opportunity to measure development assistance performance more in outcomes than in physical deliverables.”

By 2010, some progress may have been made on this. The Automated Directives System 200 USAID Introduction to Programming Policy [71 p.], revised in 2010, defines an Output as:

“A tangible, immediate, and intended product or consequence of an activity within USAID control. Examples of outputs include people fed, personnel trained, better technologies developed, and new construction.” [p. 67]

This definition seems to clearly define Outputs as completed activities.

While the USAID “Guide on Planning” definition of Outputs differs from this slightly and leaves some room for possible results as “people able to exercise a specific skill, buildings built, or better technologies developed and implemented…”, it also notes that

“…it is important to understand the difference between Outputs and results….In differentiating outputs from results, it can be useful to think of results as developmentally significant changes that affect a broad segment of society, while outputs are lower-level steps that are essential in achieving these changes.” [p. 27].

A similar distinction between results and Outputs occurs in the USAID Introduction to Programming Policy where results are defined as:

“A significant, intended, and measurable change in the condition of a customer, or a change in the host country, institutions, or other entities that will affect the customer directly or indirectly. Results are typically broader than USAID-funded outputs….”[p. 70]

This suggests that simply reporting on completed activities or products would not be acceptable within the evolving USAID context. Given that the United States provides such a large percentage of the assistance available to agencies such as UNDP, it remains to be seen whether this focus on results has in any way been communicated to the UN agencies.

The DFID Results Framework

Length: 5 documents, 378 p.

Most useful: Guidance on Using the Revised LFA

The DFID results chain appears, from the documents I have seen, to look like this:

Activities→Outputs→Purpose→Goal

In 2005, DFID’s “Guidance on Evaluation and Review” for staff [87 p.] had a definition of Outputs similar to those of the OECD/DAC and UN:

“The products, capital goods and services which result from a development intervention; may also include changes resulting from the intervention which are relevant to the achievement of outcomes.”

While not easily available on DFID’s own website (this link comes from the Monitoring and Evaluations News archives), the 2009 DFID How-to Note: “Guidance on Using the Revised LFA” [34 p.] changed the definition of Outputs to focus on deliverables:

“Outputs are the specific, direct deliverables of the project. These will provide the conditions necessary to achieve the Purpose”.

Examples provided in this DFID LFA guide lead to the conclusion that there is still room for looking at Outputs in the DFID context, as both completed activities and as results.

For example – deliverables or completed activities:

“Output 1: All health professionals in selected Central and District Hospitals trained on revised curriculum for patient-centred clinical care”

Possible deliverable, but also possibly a near-term result:

“Output 2: In 4 target districts Ministry of Health professionals delivering all aspects of Primary Health Care (PHC) services in partnership with NGOs and Village Health Committees”

Definitely a longer-term result, probably at the Outcome or Purpose level:

“Output 3: Selected Central and District Hospitals achieving year on year improvements in national assessments of patient-centred clinical care” [p. 12]

This is an unnecessarily confusing mixing of real results and completed activities, in the use of one term -- “Outputs”.

According to the DFID revised LFA guide, all DFID projects are now supposed to collect baseline data on indicators for Outputs, Purpose and Goal before approval [p. 33] and to assign to each Output a weight that will “provide a clearer link to how output performance relates to project purpose performance”. This suggests that while not completely responsible for achievement of results at the purpose level, project managers are expected to report on progress at that level and assess the continuing likelihood that Outputs are making a contribution to broader achievement of results.

Of course, the fact that this document is not easily available from DFID leaves open the possibility that the ideas in it may not be in universal favour within DFID.

But there are other documents which suggest that reporting on results, and not just on activities, is important to DFID. The 2010 synthesis review of 970 DFID Project Completion Reports [124 p.] criticised the lack of assessment of how projects contributed to goals, and in doing so may have laid the groundwork for recent criticisms of DFID performance by the new British government. But it did note that “The main concern in the [Project Completion Report] process is to assess performance (achievement of the stated purpose)” [p. 36]. This is clearly a focus on results, and not just on delivery of products, or completion of activities.

And as an indicator that the focus on results is being taken seriously at the political level, a recently leaked DFID memorandum on which programmes or projects should be cut suggests that the government should be focusing on projects that can be defended “as outcome focused as possible, and will deliver value for money”, and that DFID “will only judge ourselves against commitments and outcomes that we assess pass the fitness test.”

As Philip Dearden noted in a discussion about implementation of the new DFID logical framework on the Monitoring and Evaluation News website: “Its very important to remember that many DFID programmes are now spending huge amounts of money and we need to know what changes the money is actually going to bring about.”

The April 2010 DAC OECD peer review on British aid [130 p.] concluded that

“DFID has a strong results-based management framework, and this – combined with a purpose and performance-driven organisational culture and cohesion at the senior level – is important in ensuring effective delivery of the aid programme.”

And, even before the new British Government’s multilateral aid review, there were signs that DFID was concerned about results reporting in multilateral agencies. The 2009 Guide on Using the Revised LFA put it quite clearly:

“…DFID will have to work with the fact that multiple partners mean differences in terminology and approaches.

DFID has played a leading role in ensuring harmonisation of approaches, and is committed to continuing in this vein. However, it is important that in pursuing a harmonisation agenda, we do not relax our requirements for robust monitoring and evaluation tools.

Differences in language and approach should not be an excuse for gaps in information. In fact, the revised logframe format has already been used by DFID teams when negotiating with partners. DFID needs the information in the logframe in order to report to UK taxpayers that funds are being used in the best possible way and delivering measurable results.” [p. 16]

In part because of this, and with the UK review of aid which is about to begin, there can only be more pressure forthcoming on project managers under DFID funding to go beyond reporting on activities and products, and to monitor progress on results.

It will be interesting to see if this applies to multilateral agencies using DFID funds. [Update note, July 2018: The DFID Multilateral Aid Review was completed in March 2011. Both older and more recent bilateral peer reviews of DFID are available on the OECD/DAC website.]

The Global Affairs Canada (CIDA) Results Framework

Note: See a more current and detailed analysis updated in the March 2021 review of GAC RBM Guides, which also includes the most recently updated links.

Length: 103 p.

Getting a clear idea of the CIDA Results-Based Management framework is relatively easy in comparison to some other agencies, as the CIDA RBM system, revised in 2008, is being implemented, and most of the definitions [were] included in 3 documents available online at the CIDA website [Edit: now the Global Affairs Canada development website]. The most important of these was the CIDA RBM Guide [Edit: this is now the primary RBM Guide for Global Affairs Canada, "Results-based management for International assistance programming: A how-to guide" (PDF)].

In 2008, CIDA made changes to its results-based management policy, dropping the Log Frame (although not, as has been suggested, the Logical Framework Approach) and replacing it with a Logic Model. The former CIDA results chain, in general use up until the end of 2009, looked like this:

Activities→Outputs→Outcomes→Impact

The new CIDA results chain (which started implementation in 2010) is as follows:

Activities→Outputs→Immediate Outcomes→Intermediate Outcomes→Ultimate Outcomes

There have also, as a quick glance at these two will show, been changes to the RBM terminology CIDA/Global Affairs Canada uses.

In the past CIDA regarded Outputs as near-term results -- for example, changes in understanding arising out of training activities. But, as this document noted, “given the almost universally accepted definition of ‘outputs’ by donors, OECD DAC, and [the Canadian Treasury Board Secretariat] as products or services, it is necessary to readjust CIDA’s former term [for near-term results] to ‘immediate outcome.’”

The change is useful because it makes a very clear distinction between completed activities and results. But it is based on the misconception that other donors in fact do have a clear definition of Outputs as completed activities, and do not permit results to be included in the term “Outputs”. This is something that in practice is clearly not the case for many UN agencies, for SIDA, AusAID, DANIDA, USAID and even for DFID.

The [current] CIDA/Global Affairs Results-Based Management framework, like most other current aid agency documents, now defines Outputs as “Direct products or services stemming from the activities of an organization, program, policy or initiative”.

It is worth noting, however, that “changes” are not included in the definition of "Outputs", and one explanation of why Outputs have been redefined as products and completed activities is that the new definition, as a previous CIDA document explained:

“Clearly splits development results from products and services (outputs). This distinction should strengthen performance reporting by partners, given that it is now clear they will have to report on both outputs and outcomes”

The examples provided in [both past and current] CIDA/Global Affairs Canada Results-Based Management publications also make it clear that Outputs are completed activities or products, but not results. For example,

Activity: Build wells

Output: Wells built

Outcome: Increased access to water

Activity: Develop and deliver training on well maintenance

Output: Training on well maintenance developed and delivered

Outcome: Increased ability to maintain wells.

Results in the CIDA/Global Affairs Canada context are now described this way:

“Results are the same as outcomes. An outcome is a describable or measurable change that is derived from an initiative's outputs or lower-level outcomes.” [p. 8]

- Immediate Outcomes are near-term results phrased as changes – increases in understanding, skills or access.

- Intermediate Outcomes are mid-term changes, “expected to logically occur” within the life of a project, if Immediate Outcomes are achieved, including things such as increased use of clean water, or improved trust in government.

- Ultimate Outcomes are hoped for long-term changes, the justification for the project, but unlikely to be achieved during the life of a project. These refer to things such as improved health status, or reduced vulnerability of children in conflict areas.

These results categories are illustrated in much more detail in the Global Affairs RBM Guide.

The 2007 OECD DAC peer review of Canada’s aid programme [107 p.] noted that the Results-Based Management and internal audit processes in CIDA were:

“...cumbersome, with limited differentiation in the indicators required and the processes involved for large and small programmes. While this helps to compare results among different activities, efficiency is compromised. The system might also be used to justify risk aversion rather than risk management, especially in those areas where it is more challenging to articulate measurable results (e.g. in governance)” [p. 49]

The OECD/DAC review also noted the relatively strict CIDA application of RBM to small agencies:

“ For example, an application for a small workshop organised by an NGO in Canada has to set out development results as if it were equivalent to a major bilateral programme in a partner country, with requirements to provide an impact evaluation. While providing discipline for NGO proposals may appear reasonable in theory, the practice can appear unnecessarily burdensome to the applicant.” [footnote 37]

The new CIDA/Global Affairs RBM system is unlikely to relieve small agencies of the requirement to justify activities in terms of results, but with its simpler approach to visualising results in a Logic Model, it may be an attempt to address the issues raised by the peer review, about the unwieldy nature of the process, and to make the process of identifying results more intuitive.

While the current CIDA/Global Affairs results framework is clear and logical, there are, of course, questions about whether it will actually be implemented, not just in plans, but in reports from project managers that are clearer and more useful than those produced before the new framework was put in place. CIDA projects have often had real difficulties in producing baseline data, and it will be interesting to see if the new framework stimulates more attention from Global Affairs Canada's own managers and from project directors to this important element of Results-Based Management.

It will also be interesting to see if CIDA applies its standards on accountability and results reporting to multilateral agencies.

Update: CIDA is now Global Affairs Canada. Readers may want to refer to the updated review of the most recent Global Affairs Canada RBM guide.

The EuropeAid Results Frameworks

Length: 10 documents, 710 p.

Most useful: Results Oriented Monitoring Handbook

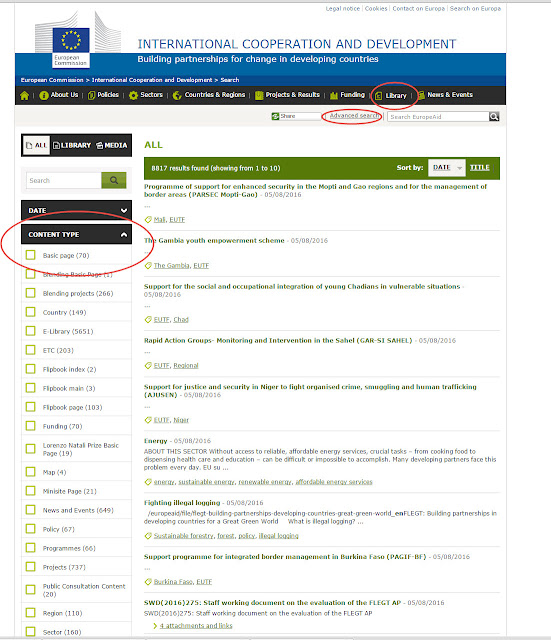

[2016 Edit: Many of the EuropeAid documents have moved, but if readers use the "advanced search" tool in the "Library" tab, and narrow the search further by opening the "categories" tab under that, it is still possible to find many, although not all, of the documents referred to below.]

Despite the obvious inference that the EU aid programme could be treated as a multilateral exercise, for the purpose of this discussion, I am treating EuropeAid as a bilateral agency. But, of course, EuropeAid itself has to account for the multiple results frameworks of its component members, and as the 2007 OECD DAC peer review of the European Union's aid programme [114 p.] noted,

"Because the Community functions both as a donor agency and as a multilateral recipient of Member State funds it is understandable that it does not allocate a large proportion of its funds to other multilateral institutions...." [p. 42]

But because it works with a multitude of member countries, it has to work with differing perspectives on results, and this is reflected in the many different results chains in EuropeAid and related documents, five of which are discussed here.

The EuropeAid site has a few pages which summarize a lot of data. This included [Edit: in the past] the EuropeAid glossary of terms related to results-based management in which the EuropeAid results chain takes this form:

Activities→Outputs→Results→Impact

The EuropeAid glossary of RBM terms makes it clear that it neither defines, nor uses, the term “Outcome”. It simply refers to results.

EuropeAid Outputs are defined as “Goods and / or services produced / delivered by the intervention (e.g. rehabilitated road).”

EuropeAid Results are defined as “Initial change attributable to the intervention (e.g. reduced transport time and cost)” leading to

EuropeAid Impacts, defined as “Further long term change attributable to the intervention (e.g. development of trade)”.

The EuropeAid guide on Evaluation Methods [97 p.] provides examples for Outputs such as “teachers trained” or “advice provided to groups of farmers” and for results, such as “girls benefitting from increased access to education” or “new breeding practices that prevent desertification”.

Both of these examples, among others, indicate a clear difference in this EuropeAid framework between Outputs as completed activities, and results.

But other documents, such as the EuropeAid Results Oriented Monitoring Handbook [Edit: in the 2009 version, at least] clouded the picture on how “results” are defined. The 2009 version of the handbook noted, for example that

“Monitors have to fully understand the concepts and terminology used in ROM and to apply them in the correct and coherent manner. This is specially true for ‘efficiency’, ‘effectiveness’, ‘outcomes’ and ‘outputs’ as these terms might be used differently in other management and M&E systems.” [p. 48]

The irony is that this document did use some words differently than those defined in the EuropeAid Glossary. While the Glossary refers only to Outputs, Results and Impact, distinguishing between Outputs and Results, the Handbook on Results Oriented Monitoring said of Outputs that they are:

“the goods and services produced; e.g. children vaccinated. In the EC’s Logframe structure these are referred to as ‘results’;” [p. 29].

The EuropeAid Glossary specifically avoided including Outputs in results, but noted that other EC documents, such as the Handbook, may use the term “... in the wider sense.”

The EuropeAid Results Oriented Monitoring Handbook is, nevertheless, an interesting document, and one which is updated regularly. It appears to be less a guide to using RBM in management, than a detailed guide to managing the monitoring process, describing a very systematic and detailed framework for monitoring which goes far beyond completion of activities, and includes assessments of Outcomes, even Impacts, and the acquisition of information from stakeholders on relevance, effectiveness and sustainability of results. And within this document there is a distinction between

- EuropeAid Outputs as completed activities (e.g. “Training sessions”),

- EuropeAid Outcomes as intermediate results (“improved capacity of those who attended the training”),

- EuropeAid Purpose, or longer-term results, (“improvements in area of intervention due to the improved capacity of the target group”) [p. 65], which are the “specific, central highest ranking objective of the project” [p. 71], the highest level on which a project reports, but not necessarily the highest level on which it is monitored, and

- EuropeAid Impact as the overarching result to which a project may contribute, and justification for a project.

In answer to the question “How well is the Project achieving its planned results?” the Results Oriented Monitoring Handbook said in the 2009 version: “It is crucial to understand that effectiveness in this part is concerned with outcomes, not with outputs (tangible goods and services).” [p. 71]

There is a clear, and logical results chain here then, and this is reflected in the differentiation between Outputs and changes -- as results -- in the detailed Background Conclusion Sheet [p. 65-74].

Activities→Outputs→Outcomes→Purpose→Impact

The EuropeAid website’s section on results monitoring also has 6 very short synthesis reports on results, the most recent of which is for projects in 2007, but none of these documents makes it clear whether the monitoring is focusing on results as changes, or whether this is referring to completed activities or products. There are references to “impacts” but only as potential results, so it is not clear from any of these short reports what “results” are actually being achieved. Presumably the more detailed reports would clarify this.

But also [Edit: in recent years] EuropeAid has put together, in its Tools and Methods series, two interesting, and potentially very useful, documents suggesting that for capacity development EuropeAid is indeed focused on results. These include the March 2009 EuropeAid Guidelines on Making Technical Cooperation More Effective [138 p.], and the EuropeAid Toolkit for Capacity Development [82 p.]. Both of these discuss a results chain for technical cooperation, focused on capacity development, in which the attention to results, not just to completed activities or delivery of products, is clear. The Toolkit for Capacity Development presents the results chain for capacity development interventions this way [p. 68-70]:

Activities→sector capacity (capacity development outputs)→sector outputs (capacity development Outcomes)→Sector Outcomes→Sector Impacts

[Edit: Many of these documents are in 2016 difficult to find, but there are an increasing number of other guides and briefing notes in the EuropeAid international cooperation and development library.]

In this context, as the Guidelines on Making Technical Cooperation More Effective say, “…logical frameworks for [Capacity Development Technical Cooperation] need to focus on outputs and outcomes beyond the immediate deliverables by TC;” [p. 29]

It is clear from the discussions in these two guides that the sector capacity or capacity development outputs are real changes in the ability of host government agencies, for example, to deliver services more effectively. These then, whatever they are called, are results. These Guides themselves refer to the 2007 Guide on Support to Sector Programmes [119 p.]. That Guide uses the simpler results chain of

Activities→Outputs→Outcomes→Impacts

Outputs in this document are clearly completed activities. [p. 89]

There are other, more complex, results chains referenced in five working papers on results indicators on transport, education, water and sanitation, health and agriculture, all produced in 2009, suggesting a 6-stage results chain:

Activities→Outputs→Outcomes→Specific Impacts→Intermediate Impacts→Global Impacts.

This is focused at the agency level, rather than the project level. These guides too, however, make it clear it is change that must be followed, not just completion of activities.

The European Community's aid volume is huge. As the 2007 OECD DAC peer review noted, the "volume of Community ODA alone is larger than that of the World Bank’s International Development Association and several times that of the United Nations Development Programme" [p. 12], and much of it is administered or influenced by the work of EuropeAid. EuropeAid should, therefore, have a very big influence on how multilateral aid agencies treat results reporting.

If the definitions in the EuropeAid Glossary of RBM terms, and the types of capacity results described in the 2009 EuropeAid Tools and Methods guides, are used, there is a clear distinction between results and completed activities. It is also clear that, despite labelling Outputs as results, the Results Oriented Monitoring Handbook describes an approach that focuses on the monitoring of results, not just activities, and, in theory at least, takes it seriously.

The bottom line: Holding UN agencies to account

Despite anecdotal evidence that they are themselves trying to report on results, SIDA, AusAID and DANIDA would, given the ambiguity of their own definitions of results, have some difficulty in holding UN agencies to higher RBM reporting standards.

On the other hand, despite some inter-agency differences in terminology, there is now in the RBM frameworks of DFID, and CIDA, in EuropeAid's specific focus on capacity development, and perhaps, if we can trust the definitions on its website, for USAID too, little of the confusion between completed activities and results that persists in the OECD DAC and UN definitions.

There is also in these bilateral frameworks, and despite some criticisms of how they work, little space or excuse for project managers to avoid taking responsibility for reporting not just on completed activities, but on results -- explicitly as those things that have changed after the guides are produced, the training completed, or the schools or health clinics constructed.

Given the inconsistent results reporting by UN agencies described in some of my earlier posts, even those bilateral aid organizations such as DFID, CIDA, EuropeAid or USAID which do, or purport to, hold their own projects to relatively high reporting standards, could be open to criticism for providing funds to UN agencies without requiring from them similar standards on reporting.

In this context, recent moves by DFID to assess multilateral aid could focus attention more clearly on the weak UN results culture, and how bilateral agencies deal with this.

Further reading on Results-Based Management at DFID, USAID, CIDA and EuropeAid:

- USAID policy documents

Edited to update links in March 2011, January 2012, August 2016, July 2018 and July 2019.

_____________________________________________________________